Microsoft’s AI Red Team Has Already Made the Case for Itself

For most people, the idea of using artificial intelligence tools in daily life—or even just messing around with them—has only become mainstream in recent months, with new releases of generative AI tools from a slew of big tech companies and startups, like OpenAI’s ChatGPT and Google’s Bard. But behind the scenes, the technology has been proliferating for years, along with questions about how best to evaluate and secure these new AI systems. On Monday, Microsoft is revealing details about the team within the company that since 2018 has been tasked with figuring out how to attack AI platforms to reveal their weaknesses.

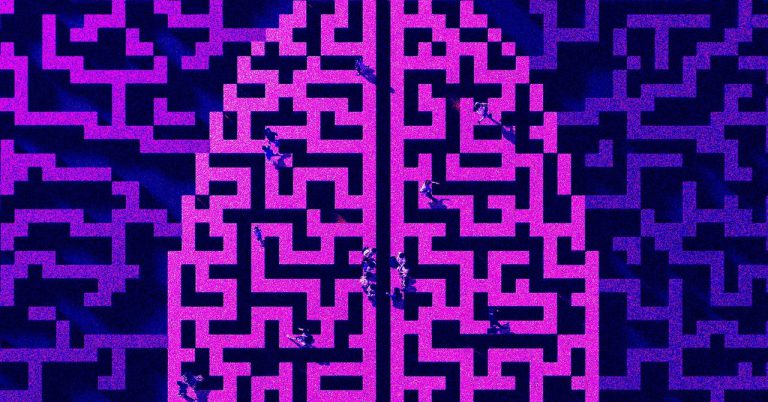

In the five years since its formation, Microsoft’s AI red team has grown from what was essentially an experiment into a full interdisciplinary team of machine learning experts, cybersecurity researchers, and even social engineers. The group works to communicate its findings within Microsoft and across the tech industry using the traditional parlance of digital security, so the ideas will be accessible rather than requiring specialized AI knowledge that many people and organizations don’t yet have. But in truth, the team has concluded that AI security has important conceptual differences from traditional digital defense, which require differences in how the AI red team approaches its work.

“When we started, the question was, ‘What are you fundamentally going to do that’s different? Why do we need an AI red team?’” says Ram Shankar Siva Kumar, the founder of Microsoft’s AI red team. “But if you look at AI red teaming as only traditional red teaming, and if you take only the security mindset, that may not be sufficient. We now have to recognize the responsible AI aspect, which is accountability of AI system failures—so generating offensive content, generating ungrounded content. That is the holy grail of AI red teaming. Not just looking at failures of security but also responsible AI failures.”

Shankar Siva Kumar says it took time to bring out this distinction and make the case that the AI red team’s mission would really have this dual focus. Much of the early work involved releasing more traditional security tools, like the 2020 Adversarial Machine Learning Threat Matrix, a collaboration between Microsoft, the nonprofit R&D group MITRE, and other researchers. That year, the group also released open source automation tools for AI security testing, known as Microsoft Counterfit. And in 2021, the red team published an additional AI security risk assessment framework.

Over time, though, the AI red team has been able to evolve and expand as the urgency of addressing machine learning flaws and failures has become more apparent.

In one early operation, the red team assessed a Microsoft cloud deployment service that had a machine learning component. The team devised a way to launch a denial of service attack on other users of the cloud service by exploiting a flaw that let them craft malicious requests to abuse the machine learning component and strategically create virtual machines, the emulated computer systems used in the cloud. By carefully placing virtual machines in key positions, the red team could launch “noisy neighbor” attacks on other cloud users, in which one customer’s activity degrades performance for another customer.